1 The Origin of Lyapunov Functions

The concept of the Lyapunov function traces its origins back to 1892, introduced by the Russian mathematician Aleksandr Lyapunov in his doctoral thesis, The General Problem of the Stability of Motion.

Lyapunov was studying the stability of complex, non-linear dynamical systems (such as planetary orbits or fluid dynamics). The traditional approach to testing stability involved explicitly solving the system’s differential equations—a task that is often mathematically impossible.

Lyapunov proposed a revolutionary workaround, now known as Lyapunov’s Direct Method. He realized that you do not need to solve the equations to prove a system is stable. Instead, if you can define a scalar “energy-like” function for the system that is strictly positive when the system is disturbed, and you can prove that this energy strictly decreases over time, then the system must eventually settle into a stable equilibrium.

Analogy: Imagine a marble rolling inside a bowl. You do not need to calculate the exact, complex trajectory of the marble to know it will eventually stop at the bottom. You simply observe that friction causes the marble’s mechanical energy to constantly decrease. The mechanical energy serves as a natural Lyapunov function.

2 Core Concepts and Mathematical Definition

In a general dynamical system with a state vector $x(t)$, a Lyapunov function $L(x)$ is a scalar function used to measure the “magnitude” of the state.

Definition (Lyapunov Function):

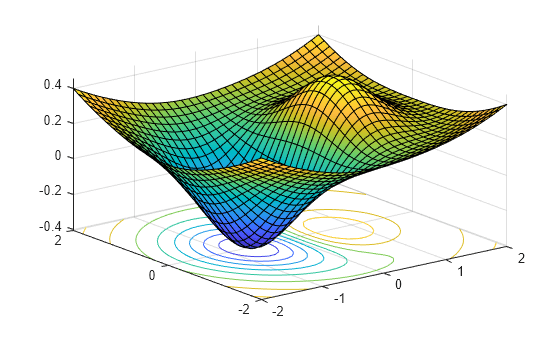

A function $L(x)$ is considered a candidate Lyapunov function if it satisfies:

- Positive Definiteness: $L(x) > 0$ for all $x \neq 0$, and $L(0) = 0$.

- Continuous Differentiability: It is smooth and continuous.

Theorem (Lyapunov Stability):

If there exists a candidate Lyapunov function $L(x)$ such that its time derivative (or drift) is negative semi-definite, i.e., $\frac{dL(x)}{dt} \le 0$ for all $x$, the origin is a stable equilibrium. If the drift is strictly negative, $\frac{dL(x)}{dt} < 0$, the system is asymptotically stable (it will return to the origin).

3 Transitioning to Stochastic Network Optimization

While originally designed for physics and differential equations, Lyapunov theory experienced a massive revival in the late 20th and early 21st centuries for controlling discrete-time computer and communication networks, largely pioneered by researchers like Michael Neely.

In network theory, we are not looking at physical energy. Instead, the “system state” $Q(t)$ typically represents the backlogs (number of unserved jobs, packets, or tokens) in a network of queues.

The Quadratic Lyapunov Function

In stochastic networks, we define a discrete-time Lyapunov function to measure the “congestion” or “queueing penalty” of the network. The most common choice is the quadratic Lyapunov function:

$$L(Q(t)) = \frac{1}{2} \sum_{i} Q_i(t)^2$$

This function maps a multi-dimensional queue state to a single non-negative scalar. It disproportionately heavily penalizes large queues due to the square term.

Lyapunov Drift

Because network arrivals and server capacities are often random variables, we look at the expected change in the Lyapunov function from one time slot to the next, given the current state. This is called the Lyapunov Drift, denoted as $\Delta L(t)$:

$$\Delta L(t) = \mathbb{E}[L(Q(t+1)) – L(Q(t)) \mid Q(t)]$$

Key Principle: By designing a routing and scheduling algorithm that minimizes $\Delta L(t)$ at every time step $t$, we greedily push the network towards an empty state (stability), preventing queues from growing to infinity.

4 The Drift-Plus-Penalty Framework

In modern resource allocation (like cloud computing, token scheduling, or data routing), simply keeping the queues stable is not enough. We usually want to optimize a time-average objective—such as minimizing power consumption, minimizing processing latency, or maximizing a utility metric—while subject to queue stability.

Let $p(t)$ be a penalty incurred at time $t$ (e.g., energy cost, or the negative of a utility function $-U(t)$).

Instead of just minimizing the drift $\Delta L(t)$, the general application in stochastic optimization uses the Drift-Plus-Penalty objective. The control algorithm makes scheduling decisions at each time step to minimize the following bound:

$$\text{Minimize: } \Delta L(t) + V \cdot \mathbb{E}[p(t) \mid Q(t)]$$

Understanding the Parameter $V$:

- $V$ is a non-negative control parameter that dictates the trade-off between network stability and the optimization objective.

- If $V = 0$: The system ignores the penalty $p(t)$ and focuses entirely on minimizing queue drift $\Delta L(t)$. This results in the lowest possible queue delays but sub-optimal utility/costs.

- If $V$ is large: The system prioritizes minimizing the penalty $p(t)$ (or maximizing utility). However, because less emphasis is placed on $\Delta L(t)$, the queue lengths $Q(t)$ will grow larger before the drift term becomes dominant enough to force the system to clear them.

This framework is incredibly powerful because it requires no prior knowledge of the statistical arrival rates or channel conditions; it makes optimal routing, queuing, and processing decisions purely based on the current queue backlogs $Q(t)$ and current penalties.

Leave a Reply

You must be logged in to post a comment.